In the past, individuals charted their career growth by balancing passion for their work, market-valued skill sets, and domain expertise. In the AI era, the challenge shifts:

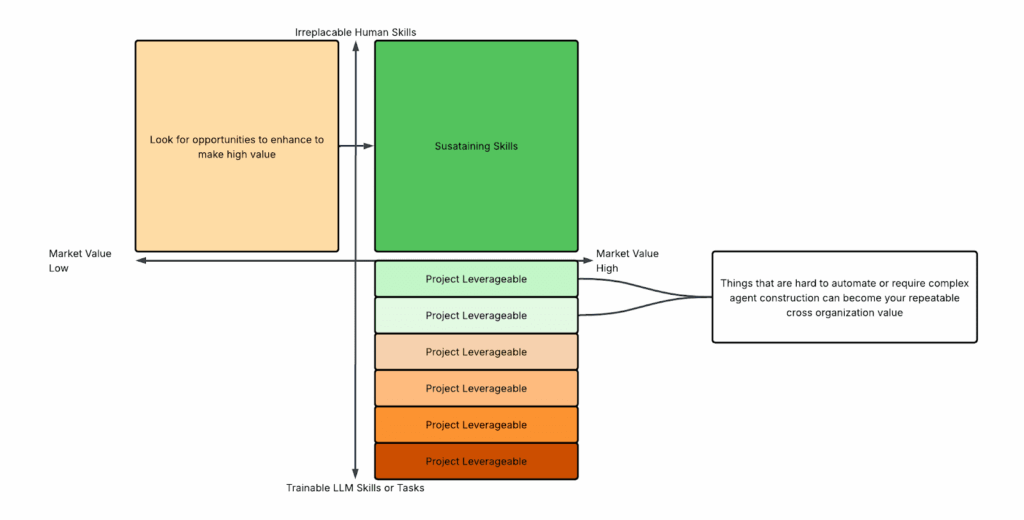

Professionals must identify and clearly articulate their human leverage: the unique value they bring to AI-enabled workflows. Your human leverage comes primarily in two forms:

- Project-based value – helping train, code, or implement AI systems. This value is often temporary and transferable, lasting over the short to medium term.

- Sustained organizational value – rooted in distinctly human cognitive strengths and contextual expertise that AI cannot easily replace.

To thrive, professionals must understand both their cognitive advantages and their domain knowledge advantages, and learn to communicate them effectively in both project-based and sustained areas of operation.

Both human cognitive strengths and domain expertise will drive your sustainable organizational value, but domain knowledge is more vulnerable over time unless it rests on differentiating human cognitive advantages. Conversely, in the short term, domain expertise is of very high value when deployed at the level of an AI project or initiative, provided it is married with organizational process awareness and a willingness to re-envision workflows at the system level rather than just automate components. It is fine, of course, to start with basic automation in order to understand and experiment. But simply swapping an agent in for a cog in an existing process misses the tremendous possibilities of human and AI collaboration: creating additional systemic value rather than merely making today's operations more efficient.

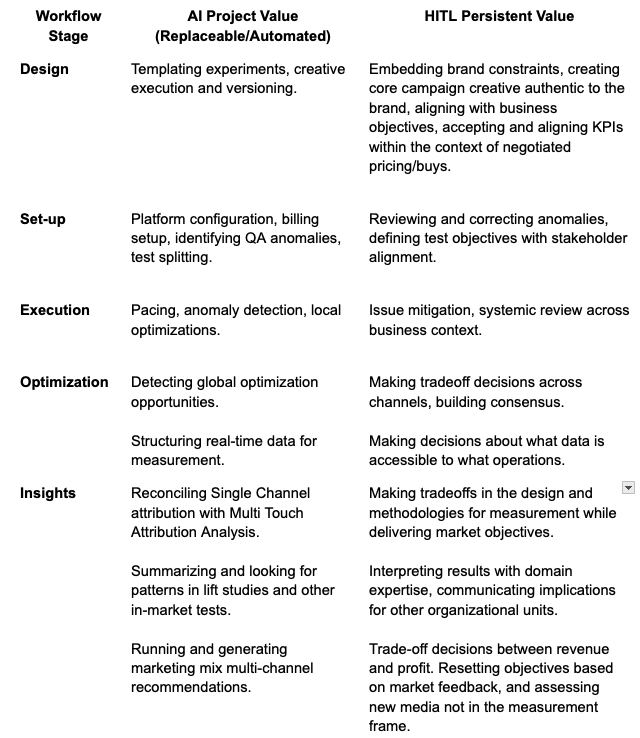

A Workflow Lens: Where AI Fits, Where Humans Lead

To make these ideas tangible, let’s break them into a workflow structure. Consider a typical operational process, like Ad Operations in digital advertising.

Notice the pattern: AI excels at structured, repeatable, and data-heavy tasks, where pattern matching and detection are advantageous. A human in the loop (HITL) remains critical where judgment, tradeoffs, ethics, or system-level reasoning are required.

Now, this is just a mapping of basic existing practices, illustrating where humans remain essential when existing workflows are simply automated to gain efficiency through AI deployments. True leaders in AI adoption, be they individuals or organizations, will move beyond this stage of incremental process optimization. They will identify where new forms of value can emerge, either by redefining how the work itself is structured or by introducing new critical processes that naturally devalue older ones. Efficiency perfects what already exists; creativity matched with intelligence reimagines what could. The advantage now belongs to those who leverage systems design across humans and AI to extend, rather than merely accelerate, the limits of performance. An unrelenting reductionist focus on efficiency, a great risk I keep coming back to, can erase the resources and the problem space required for true transformation. This is critical for organizations, and it aligns the interests of capital with supporting the development of human leverage.

Efficiency is only a goal when outcomes remain bounded in a known range. Real opportunity lies in discovering higher-order payoffs unlocked through the integration of human and AI agents working together in newly designed patterns of operation.

As AI empowers humans to accomplish with ease parts of the workflow that used to be more challenging, time-consuming, or costly, other parts of the workflow may become less important. For example, MTA (Multi-Touch Attribution) has been a fundamental part of media accountability and optimization workflows for the last 15 years. As AI makes experimental design and machine learning techniques less burdensome to deploy and more rapidly actionable, MTA techniques will likely have decreasing utility, and increased investment in supporting them will certainly look questionable (due in part to other market pressures that can be discussed in other venues). So the ecosystem shifts, and competencies in experimentation and marketing mix tools become more important than they used to be. That is to say, the changes will be broader than whether AI or a human operator performs a current process. Entire competency sets will be rewritten as AI and humans rewrite the best ways to achieve results.

Sangeet Paul Choudary dedicates an entire book, Reshuffle, to this topic more broadly. The “contextual value” of a given work role to the “system” of domain operation is one of the key insights he articulates well. It should inform how you cultivate your human capital (and how you accept the limits of what is truly predictable and which changes carry unexpected consequences). As Choudary so eloquently states, “AI not only lowers the economic value of specific skills by reducing scarcity, but it also reduces the contextual values of specific roles by changing how the system is structured around them…our greatest challenge is not automation replacing skills, but a combination of automation and coordination continuously changing job architecture.” From a knowledge worker’s perspective, re-architecting one’s role therefore means understanding not only what one does, but how that work connects to and enhances the evolving processes and outcomes of value within an AI-driven organization.

Embracing the re-architecting of your contributions and utility to an organization will be necessary for sustaining your career. Cultivating cognitive abilities around strategic creativity, adaptability, and systems thinking, coupled with the business practice of communicating them as practical plans of action within larger systemic change, is no longer just a leadership skill set. In general, workers can no longer rely on the merit of their skills and performance alone to maintain their professional trajectory.

What does “rearchitecting” mean?

When an industry undergoes a major ecosystem shift, specifically one driven by AI adoption and reconfiguration, re-architecting one’s role means redesigning the function you perform in a networked, semi-autonomous system. Instead of being a node of execution, you become a source of orchestration, judgment, and interpretation and narrative explanation.

This involves:

- Focusing on where information, decision-making, and accountability are critical in evolving AI-enabled workflows

- Identifying your role and others’ roles in designing these workflows, and intervening regularly to validate and improve these systems

You need to shift your knowledge, adaptability, and trust from a focus on operational output toward contextual insight, as those outputs are increasingly facilitated by AI counterparts (Connectors, Agents, and the AI Personas/Twins to come). You are reframing and refining your interfaces with AI-mediated collaborations. You are no longer valued simply for being a process participant creating outputs; you must see yourself as an ecosystem designer with oversight responsibilities.

Returning to the Advertising Operations (AdOps) example, traditional AdOps has centered on manual campaign set-up, trafficking, QA, pacing, and reporting: human precision and efficiency in repetitive workflows. AI-era AdOps replaces executional functions with autonomous optimization agents that adjust bids, budgets, and creative rotation dynamically. The human in the loop (HITL) now serves two functions: an interventionist function, handling process exceptions, the context of externalities, and strategic change; and a generalist function, determining what can be brought to the process anew.

Where the old day-to-day job of a campaign manager was to ensure campaigns hit pacing and performance goals and to report on them to interested parties, the new goal is to design, calibrate, and monitor autonomous campaign systems for accuracy, alignment, and brand integrity. Interpretation and creating cohesiveness become more critical than just tracking and framing results.
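For readers who think in code, the division of labor described above can be sketched as a small routing rule. This is a minimal illustration only, not a description of any real AdOps platform: the `CampaignSnapshot` fields, the `route` function, and the 10% and 30% thresholds are all hypothetical assumptions chosen for the example. The point is simply that routine pacing drift is left to an autonomous agent, while large deviations and brand-integrity concerns are escalated to a human.

```python
from dataclasses import dataclass


@dataclass
class CampaignSnapshot:
    """Hypothetical summary of a campaign at one point in its flight."""
    name: str
    budget: float            # total budget for the flight
    spend: float             # spend to date
    elapsed_fraction: float  # share of the flight elapsed, between 0 and 1
    brand_safety_flag: bool = False  # assumed signal from a brand-safety check


def pacing_ratio(c: CampaignSnapshot) -> float:
    """Actual spend divided by the spend expected under even pacing."""
    expected = c.budget * c.elapsed_fraction
    return c.spend / expected if expected > 0 else 1.0


def route(c: CampaignSnapshot, tolerance: float = 0.10,
          escalation: float = 0.30) -> str:
    """Decide who acts: nobody, the agent, or the human in the loop.

    The thresholds are illustrative assumptions, not industry standards.
    """
    # Brand-integrity questions call for judgment, so they always escalate.
    if c.brand_safety_flag:
        return "human_review"
    deviation = abs(pacing_ratio(c) - 1.0)
    if deviation <= tolerance:
        return "no_action"       # on pace, nothing to do
    if deviation <= escalation:
        return "agent_adjust"    # routine drift, agent rebalances bids and budgets
    return "human_review"        # large exception, a person investigates context


campaigns = [
    CampaignSnapshot("on_pace", budget=1000, spend=500, elapsed_fraction=0.5),
    CampaignSnapshot("slow", budget=1000, spend=400, elapsed_fraction=0.5),
    CampaignSnapshot("stalled", budget=1000, spend=200, elapsed_fraction=0.5),
    CampaignSnapshot("flagged", budget=1000, spend=500, elapsed_fraction=0.5,
                     brand_safety_flag=True),
]
for c in campaigns:
    print(c.name, route(c))
```

In this framing, the campaign manager's work moves from performing the adjustments to choosing the thresholds, deciding which signals force an escalation, and interpreting the cases that land in human review.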

Skills And…

Right now there is a tremendously fear-driven culture of AI skill attainment. Acquiring skills is an absolutely necessary part of adapting to the real changes in the market, but this market transition is not just a matter of incorporating new technical skills. The tool sets are evolving so quickly that entire categories of startups are impacted, if not eliminated, as the core LLM players incorporate new functions directly into their platforms. Skill attainment is much more about learning the model of AI adoption, and which of your own human cognitive skills need to be activated and refined, than about just picking the right technology set, certifications, and templates.

As platforms bake in higher-order capabilities, the durable advantage will lie in people who pair technical fluency with superior cognitive skills — the ability to interpret, orchestrate, govern, and translate model behavior into human outcomes. Organizations that treat contextual judgment as a bargainable commodity will lose long-term value. Those that build role architectures and compensation to protect and amplify human contextual capital will win through sustained growth.

The real measure of adaptation isn’t how fast we adopt new tools, but how deeply we redesign our systems to let human judgment and AI intelligence compound one another. Embedding cognitive architecture into how we solve problems, as organizations and as individuals, is the next competitive advantage.